I will be at ReCon 16 in Edinburgh (hashtag #ReCon_16), the second ReCon event I've attended (see Thoughts on ReCon 15: DOIs, GitHub, ORCID, altmetric, and transitive credit). For the hack day that follows I've put together some instructions for a way to glue together annotations made by multiple people using hypothes.is. It works by using IFTTT to read a user's annotation stream (i.e., the annotations they've made) and then post those to a CouchDB database hosted by Cloudant.

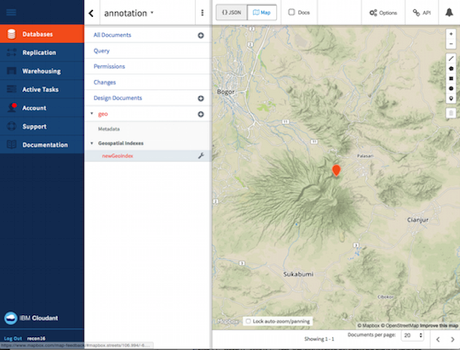

Why, you might ask? Well, I'm interested in using hypothes.is to make machine-readable annotations on papers. For example, we could select a pair of geographic co-ordinates (latitude and longitude) in a paper, tag it "geo", then have a tool that takes that annotation, converts it to a pair of decimal numbers and renders it on a map.

Or we could be reading a paper whose reference list lacks links to the cited literature (i.e., there are no DOIs). We could add those by selecting the reference, pasting in the DOI as the annotation, and tagging it "cites". If we aggregate all those annotations then we could write a query that lists all the DOIs of the cited literature (i.e., it builds a small part of the citation graph).
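To make the "cites" idea concrete, here is a minimal Python sketch of that kind of query, run over annotations already pulled out of the database. The field names (`uri`, `text`, `tags`) are my assumption about what each stored annotation looks like, the function name `cited_dois` is made up for illustration, and the DOIs in the sample data are invented.

```python
import re
from collections import defaultdict

# Loose DOI matcher: "10.", a registrant code, a slash, then a suffix.
DOI_PATTERN = re.compile(r"10\.\d{4,9}/\S+")


def cited_dois(annotations):
    """Map each annotated page URI to the sorted DOIs tagged "cites" on it."""
    graph = defaultdict(set)
    for annotation in annotations:
        # Only annotations explicitly tagged "cites" count as citation links.
        if "cites" not in annotation.get("tags", []):
            continue
        match = DOI_PATTERN.search(annotation.get("text", ""))
        if match:
            # Drop trailing punctuation and lowercase so duplicates collapse.
            doi = match.group(0).rstrip(".,;").lower()
            graph[annotation["uri"]].add(doi)
    return {uri: sorted(dois) for uri, dois in graph.items()}


# Invented sample data: two users annotating the same (hypothetical) paper.
sample = [
    {"uri": "http://example.org/paper1", "text": "10.1234/abc.1", "tags": ["cites"]},
    {"uri": "http://example.org/paper1", "text": "doi:10.1234/ABC.1.", "tags": ["cites"]},
    {"uri": "http://example.org/paper1", "text": "10.5678/xyz.2", "tags": ["cites"]},
    {"uri": "http://example.org/paper1", "text": "nice figure", "tags": ["comment"]},
]
citations = cited_dois(sample)
```

Because the DOIs are normalised before being stored in a set, two people adding the same citation to the same paper only produce one edge in the graph.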

By aggregating across multiple users we effectively crowdsource the annotation problem, while still being able to collect all those annotations in one place. For this hack I'm going to automate this collection by enabling each user to create an IFTTT recipe that feeds their annotations into the database (they can stop this at any time by switching off the recipe).

Manual annotation is not scalable, but it does enable us to explore different ways to annotate the literature, and what sort of things people may be interested in. For example, we could flag scientific names, grant numbers, localities, specimens, concepts, people, etc. We could explore what degree of post-processing would be needed to make the annotations computable (e.g., converting "8°07′45.73″S, 63°42′09.64″W" into decimal latitude and longitude).
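As a rough idea of what that post-processing might look like for the co-ordinate example, here is a small Python sketch. It assumes the annotation text contains one latitude and one longitude written as degrees, minutes, seconds and a hemisphere letter; the function names are hypothetical, and real papers will use many other formats that this pattern won't catch.

```python
import re

# One coordinate such as 8°07′45.73″S: degrees, minutes, seconds, hemisphere.
# Accepts both typographic primes (′ ″) and plain quotes (' ").
DMS_PATTERN = re.compile(
    r"(\d+)\s*°\s*(\d+)\s*[′']\s*(\d+(?:\.\d+)?)\s*[″\"]\s*([NSEW])"
)


def dms_to_decimal(degrees, minutes, seconds, hemisphere):
    """Convert degrees/minutes/seconds to signed decimal degrees."""
    value = float(degrees) + float(minutes) / 60 + float(seconds) / 3600
    # South and west are negative by convention.
    return -value if hemisphere in "SW" else value


def parse_lat_lon(text):
    """Return (latitude, longitude) in decimal degrees from a DMS string."""
    parts = {}
    for deg, mins, secs, hemi in DMS_PATTERN.findall(text):
        key = "lat" if hemi in "NS" else "lon"
        parts[key] = dms_to_decimal(deg, mins, secs, hemi)
    if "lat" not in parts or "lon" not in parts:
        raise ValueError(f"could not find both latitude and longitude in {text!r}")
    return parts["lat"], parts["lon"]


lat, lon = parse_lat_lon("8°07′45.73″S, 63°42′09.64″W")
```

For the example above this gives a point at roughly 8.13 degrees south and 63.70 degrees west, which is the sort of pair a mapping tool could plot directly.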

If this project works I hope to learn something about what people want to extract from the literature, and to what extent having a database of annotations can provide useful information. This will also help inform my thinking about automated annotation, which I've explored in Hypothes.is revisited: annotating articles in BioStor.